Artificial intelligence is no longer experimental inside large organizations. It runs fraud detection engines, powers customer support automation, supports credit scoring, optimizes logistics, and drives predictive analytics. But here is the hard truth: deploying AI without structured AI Testing is a liability.

Enterprise AI systems do not fail loudly at first. They fail quietly — through biased outputs, model drift, security gaps, unstable performance, and compliance violations. That is why AI Testing has become a foundational discipline for scalable digital transformation.

At NKKTech Global, we treat AI Testing not as a final QA step, but as a continuous governance framework integrated throughout the AI lifecycle. Below are six critical AI model testing methods every enterprise must implement to ensure reliability, compliance, and long-term performance.

Why AI Testing Is Different from Traditional Software Testing

Traditional systems follow rule-based logic. AI systems learn from data. That difference changes everything.

With conventional software:

- Inputs produce predictable outputs.

- Edge cases are defined manually.

- Code logic is deterministic.

With AI systems:

- Outputs are probabilistic.

- Models evolve with retraining.

- Performance depends on data quality.

- Bias and drift can emerge over time.

Because of this complexity, AI Testing must go beyond functional testing. It must validate behavior, fairness, performance stability, and governance compliance.

Enterprises that underestimate AI Testing often discover problems after deployment, when correction costs are significantly higher.

1. Functional and Intent Validation

The first layer of AI Testing focuses on functional correctness.

This answers the fundamental question:

Does the AI system do what it is supposed to do?

In enterprise environments, this includes:

- Validating intent recognition accuracy (for chatbots and voice systems)

- Testing prediction accuracy thresholds

- Confirming correct workflow triggers

- Ensuring system integrations operate properly

- Verifying escalation logic

For example, if an AI model predicts customer churn, AI Testing must confirm that:

- The predicted churn probabilities meet the expected accuracy range.

- Alerts trigger correctly in CRM systems.

- False positives remain within acceptable tolerance.
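
The churn checks above can be sketched as a simple release gate. This is a minimal illustration, not a description of any specific production pipeline: the `ChurnCase` type, the function names, the sample data, and the thresholds (80% accuracy, 10% false positive rate) are all assumptions chosen for the example. Verifying that alerts reach a CRM would need a separate integration test and is not shown.

```python
from dataclasses import dataclass


@dataclass
class ChurnCase:
    predicted_probability: float  # model output in [0, 1]
    churned: bool                 # ground-truth label


def evaluate_churn_model(cases, decision_threshold=0.5):
    """Return accuracy and false positive rate at a decision threshold."""
    tp = fp = tn = fn = 0
    for case in cases:
        flagged = case.predicted_probability >= decision_threshold
        if flagged and case.churned:
            tp += 1
        elif flagged and not case.churned:
            fp += 1
        elif not flagged and case.churned:
            fn += 1
        else:
            tn += 1
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total if total else 0.0
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0
    return {"accuracy": accuracy, "false_positive_rate": false_positive_rate}


def passes_functional_gate(metrics, min_accuracy=0.80, max_false_positive_rate=0.10):
    """Illustrative thresholds; real tolerances are a business decision."""
    return (
        metrics["accuracy"] >= min_accuracy
        and metrics["false_positive_rate"] <= max_false_positive_rate
    )


# Hypothetical labelled hold-out cases.
cases = [
    ChurnCase(0.91, True),
    ChurnCase(0.12, False),
    ChurnCase(0.67, True),
    ChurnCase(0.55, False),  # flagged but did not churn: a false positive
    ChurnCase(0.08, False),
    ChurnCase(0.30, False),
]
metrics = evaluate_churn_model(cases)
print(metrics, "gate passed:", passes_functional_gate(metrics))
```

In this sample the model clears the accuracy bar but fails the gate on false positives, which is the kind of result a headline accuracy figure alone would hide.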

At NKKTech Global, we build structured AI Testing matrices that simulate real enterprise scenarios — not just idealized inputs.

This stage ensures foundational reliability before deeper evaluations begin.

2. Data Quality and Bias Detection

Data is the fuel of AI. Poor data quality produces unreliable outputs.

One of the most critical AI model testing methods involves auditing datasets before and after model training.

Key validation steps include:

- Detecting missing or inconsistent values

- Identifying data imbalance

- Validating demographic representation

- Measuring label accuracy

- Monitoring bias indicators
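
Several of these validation steps reduce to simple dataset statistics. The sketch below assumes records are plain dictionaries and uses hypothetical field names (`group`, `income`, `approved`). It measures the missing-value rate, label balance, and the demographic parity gap, which is one common bias indicator among several; which fairness metric applies depends on the use case and the relevant regulation.

```python
from collections import Counter


def missing_rate(rows, field):
    """Fraction of rows where `field` is absent or None."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(field) is None)
    return missing / len(rows)


def class_balance(rows, label_field):
    """Share of each label value among rows that have a label."""
    counts = Counter(
        row[label_field] for row in rows if row.get(label_field) is not None
    )
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()} if total else {}


def demographic_parity_gap(rows, group_field, outcome_field):
    """Largest difference in positive-outcome rate between any two groups."""
    positives, totals = Counter(), Counter()
    for row in rows:
        group = row.get(group_field)
        if group is None:
            continue
        totals[group] += 1
        if row.get(outcome_field):
            positives[group] += 1
    rates = {group: positives[group] / totals[group] for group in totals}
    if not rates:
        return 0.0, rates
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical lending records.
rows = [
    {"group": "A", "income": 52000, "approved": 1},
    {"group": "A", "income": 61000, "approved": 1},
    {"group": "A", "income": None, "approved": 0},
    {"group": "A", "income": 48000, "approved": 1},
    {"group": "B", "income": 50000, "approved": 0},
    {"group": "B", "income": 58000, "approved": 1},
    {"group": "B", "income": 45000, "approved": 0},
    {"group": "B", "income": None, "approved": 0},
]
gap, rates = demographic_parity_gap(rows, "group", "approved")
print("missing income:", missing_rate(rows, "income"))
print("label balance:", class_balance(rows, "approved"))
print("approval rates:", rates, "gap:", gap)
```

Running the same checks before training and again on production traffic makes it possible to tell whether an imbalance came from the dataset or emerged later.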

For enterprise systems used in hiring, lending, or customer segmentation, bias detection is not optional. It is a compliance necessity.

Bias-related AI failures create legal, ethical, and reputational risks.

NKKTech Global integrates automated fairness dashboards into enterprise AI Testing pipelines to ensure ongoing monitoring.

AI systems must be accurate — but they must also be fair.

3. Performance and Stress Testing

Enterprise AI systems operate at scale. They must perform reliably under pressure.

Performance-focused AI system testing evaluates:

- Response time under peak load

- Model inference latency

- Concurrent request handling

- API stability

- Failover behavior

For example:

A voice AI system serving 10 users may perform flawlessly. The same system serving 10,000 simultaneous interactions may degrade rapidly.
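
A rough way to observe this effect is to fire concurrent requests at the system and compare latency percentiles as concurrency rises. The sketch below uses only the Python standard library. `fake_inference` is a hypothetical stand-in that sleeps for a few milliseconds; in practice it would be replaced by a call to the real endpoint, and a dedicated load-testing tool would be more appropriate for traffic on the scale of thousands of simultaneous sessions.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def fake_inference(payload):
    """Hypothetical stand-in for a real model endpoint call."""
    time.sleep(0.005)
    return {"input": payload, "score": 0.5}


def timed_call(fn, payload):
    """Return the wall-clock duration of one call in seconds."""
    start = time.perf_counter()
    fn(payload)
    return time.perf_counter() - start


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an ascending list."""
    if not sorted_values:
        raise ValueError("no samples")
    rank = max(1, math.ceil(len(sorted_values) * pct / 100))
    return sorted_values[rank - 1]


def run_load_test(fn, total_requests=200, concurrency=20):
    """Issue requests through a thread pool and summarise latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(
            pool.map(lambda i: timed_call(fn, i), range(total_requests))
        )
    latencies.sort()
    return {
        "count": len(latencies),
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "max": latencies[-1],
    }


report = run_load_test(fake_inference, total_requests=50, concurrency=10)
print(report)
```

Tail percentiles such as p95 are usually more informative than the average here, because degradation under load tends to appear first in the slowest requests.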

Without stress-based AI evaluation, enterprises risk downtime during critical business periods.

At NKKTech Global, we conduct simulation-based AI testing to replicate real-world traffic conditions across industries including finance, retail, and telecommunications.

Reliability under pressure defines enterprise readiness.

4. Model Drift and Continuous Monitoring

AI models degrade over time.

Customer behavior changes. Market conditions evolve. Language patterns shift. Fraud techniques adapt.

This phenomenon is known as model drift.

AI system testing must include:

- Drift detection algorithms

- Periodic accuracy re-evaluation

- Threshold-based retraining triggers

- Version comparison benchmarking

- Continuous performance logging

For example:

A credit risk model trained in 2022 may lose predictive power in 2025 due to macroeconomic changes.
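
One widely used drift signal is the Population Stability Index (PSI), which compares the distribution of a feature or model score at training time with its distribution in production. The sketch below is a minimal standard-library version with equal-width bins. The 0.25 retraining threshold is a common rule of thumb rather than a standard, and the sample data is synthetic.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one variable."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for value in values:
            idx = int((value - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside baseline range
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    expected_shares = bucket_shares(expected)
    actual_shares = bucket_shares(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(expected_shares, actual_shares)
    )


def needs_retraining(psi, threshold=0.25):
    """Threshold-based retraining trigger (illustrative threshold)."""
    return psi > threshold


# Synthetic scores: a uniform baseline and a shifted production sample.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [min(score + 0.3, 0.99) for score in baseline_scores]

psi = population_stability_index(baseline_scores, recent_scores)
print("PSI:", round(psi, 3), "retrain:", needs_retraining(psi))
```

PSI only detects a change in distributions. It should be paired with periodic accuracy re-evaluation on freshly labelled data, since a model can lose predictive power even when its inputs look stable.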

Without drift-focused AI Testing, enterprises operate on outdated intelligence.

NKKTech Global implements continuous AI evaluation loops integrated with MLOps pipelines to maintain long-term system accuracy.

AI is not static. Testing cannot be static either.

5. Security and Adversarial Testing

AI systems introduce unique cybersecurity vulnerabilities.

Adversarial attacks can:

- Manipulate model predictions

- Inject poisoned training data

- Exploit API endpoints

- Extract model information

Enterprise-grade AI Testing must assess:

- Resistance to adversarial inputs

- Data pipeline security

- Access control frameworks

- Encryption protocols

- API authentication systems

For example:

A malicious actor could subtly alter input data to shift model outputs in fraud detection systems.
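
The evasion scenario above can be measured directly for simple models. The sketch below assumes a toy linear fraud scorer with made-up weights and inputs. For a linear model, the most damaging bounded perturbation moves each feature by `epsilon` against the sign of its weight, so the test reports what share of correctly flagged inputs would slip through. Real fraud models are rarely linear, and testing them usually calls for a dedicated adversarial robustness library.

```python
def fraud_score(features, weights, bias):
    """Toy linear scorer; a hypothetical stand-in for a real fraud model."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def is_flagged(features, weights, bias):
    return fraud_score(features, weights, bias) > 0.0


def worst_case_evasion(features, weights, epsilon):
    """Shift each feature by epsilon in the direction that lowers the score."""
    def sign(value):
        return 1 if value > 0 else -1 if value < 0 else 0

    return [x - epsilon * sign(w) for x, w in zip(features, weights)]


def evasion_rate(flagged_inputs, weights, bias, epsilon):
    """Share of flagged inputs that evade detection after perturbation."""
    if not flagged_inputs:
        return 0.0
    evaded = sum(
        1
        for features in flagged_inputs
        if not is_flagged(worst_case_evasion(features, weights, epsilon), weights, bias)
    )
    return evaded / len(flagged_inputs)


# Illustrative model parameters and transactions the model currently flags.
weights = [0.8, -0.5, 1.2]
bias = -1.0
flagged_transactions = [
    [1.0, 0.2, 0.5],
    [0.9, 0.4, 0.5],
    [2.0, 0.0, 1.0],
    [0.5, 0.1, 0.6],
]

rate = evasion_rate(flagged_transactions, weights, bias, epsilon=0.1)
print("evasion rate at epsilon=0.1:", rate)
```

Tracking this rate across model versions gives a concrete number to set a tolerance on, rather than treating robustness as a pass or fail judgment.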

Security-focused AI model evaluation protects both infrastructure and intellectual property.

At NKKTech Global, AI security validation is embedded into system architecture — not treated as an afterthought.

6. Compliance and Explainability

In regulated industries, AI decisions must be explainable.

Compliance-driven AI Testing evaluates:

- Transparency of decision logic

- Availability of audit trails

- Documentation of training datasets

- Regulatory alignment

- Human oversight capabilities

If an AI system denies a loan application, the organization must explain why.

Explainability is not just technical. It is legal.

AI testing frameworks must confirm that:

- Outputs can be interpreted.

- Decisions can be traced.

- Documentation is accessible.

- Oversight workflows are active.
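
For the loan example, interpretability and traceability can be tested together by checking that every decision produces a structured explanation and an audit record. The sketch below assumes a simple linear credit model, where each feature's contribution is its weight times its value. The feature names, weights, and record fields are hypothetical, and this exact decomposition does not carry over to non-linear models, which need a dedicated attribution method.

```python
import json
from datetime import datetime, timezone


def explain_linear_decision(features, weights, bias, threshold=0.0):
    """Break a linear score into per-feature contributions."""
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    score = bias + sum(contributions.values())
    # The features that pushed the score down the most become the stated reasons.
    negatives = sorted(
        (value, name) for name, value in contributions.items() if value < 0
    )
    return {
        "approved": score >= threshold,
        "score": round(score, 4),
        "contributions": contributions,
        "top_negative_factors": [name for _, name in negatives[:2]],
    }


def build_audit_record(application_id, model_version, explanation):
    """Serialise a decision so it can be traced and reviewed later."""
    return json.dumps(
        {
            "application_id": application_id,
            "model_version": model_version,
            "decided_at": datetime.now(timezone.utc).isoformat(),
            "explanation": explanation,
        },
        sort_keys=True,
    )


# Hypothetical model and applicant.
weights = {"income_to_debt": 1.5, "missed_payments": -0.9, "account_age_years": 0.2}
applicant = {"income_to_debt": 0.8, "missed_payments": 3, "account_age_years": 2}

explanation = explain_linear_decision(applicant, weights, bias=-1.0)
record = build_audit_record("APP-0001", "credit-v1", explanation)
print(record)
```

A compliance test can then assert that each denial carries at least one stated factor and that the stored record names the model version that made the decision.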

NKKTech Global supports enterprises in aligning AI system evaluation protocols with regulatory requirements across APAC, Europe, and North America.

Compliance-ready AI systems inspire trust.

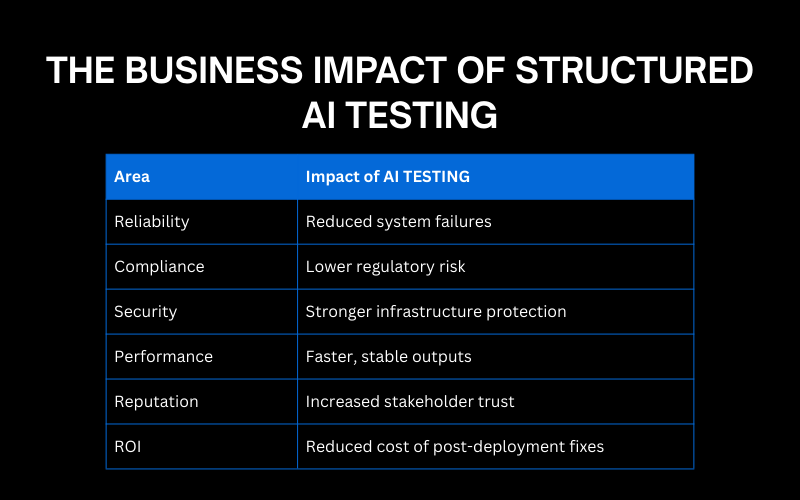

The Business Impact of Structured AI Testing

Organizations that invest in rigorous AI testing experience measurable advantages:

| Area | Impact |
| --- | --- |
| Reliability | Reduced system failures |
| Compliance | Lower regulatory risk |
| Security | Stronger infrastructure protection |
| Performance | Faster, stable outputs |
| Reputation | Increased stakeholder trust |
| ROI | Reduced cost of post-deployment fixes |

A structured AI Testing framework is not a cost center. It is risk mitigation and performance optimization.

Enterprises that skip structured AI evaluation often spend significantly more correcting failures later.

Common AI Testing Mistakes Enterprises Make

Even experienced teams fall into these traps:

- Treating AI Testing as a final step instead of a lifecycle process

- Ignoring real-world edge cases

- Failing to monitor post-launch performance

- Separating AI teams from compliance departments

- Overlooking security validation

AI complexity demands cross-functional collaboration.

Testing must involve:

- Data scientists

- DevOps engineers

- Security teams

- Legal advisors

- Business stakeholders

NKKTech Global builds integrated AI Testing governance models to ensure alignment across departments.

AI Testing as a Competitive Advantage

In 2025 and beyond, enterprise competition is not just about innovation speed. It is about innovation reliability.

Organizations that implement disciplined AI Testing:

- Deploy AI systems faster

- Gain regulatory confidence

- Reduce reputational risk

- Improve customer trust

- Scale more sustainably

AI adoption without testing creates fragility.

AI adoption with structured AI Testing creates resilience.

The Future of AI Testing

Emerging trends in AI Testing include:

- Automated test generation using AI itself

- Real-time model health scoring

- Synthetic data testing environments

- Generative AI risk simulation

- Integrated compliance dashboards

As AI systems grow more complex, AI Testing will become even more sophisticated.

Enterprises that institutionalize AI Testing today will adapt faster to future technological shifts.

Final Thoughts

Artificial intelligence can transform enterprise performance — but only if it is reliable.

AI Testing is the discipline that transforms experimental AI into mission-critical infrastructure.

From functional validation and bias detection to performance stress tests and compliance audits, AI Testing must operate continuously across the system lifecycle.

If your organization is investing heavily in AI but lacks a structured testing framework, you are building growth on unstable ground.

Reliability is engineered. It is not assumed.

Strengthen Enterprise AI with NKKTech Global

At NKKTech Global, we design comprehensive AI Testing frameworks tailored for enterprise environments.

We help organizations:

- Implement end-to-end AI Testing pipelines

- Build automated bias and drift monitoring systems

- Conduct adversarial security validation

- Align AI systems with regulatory compliance

- Maintain continuous performance oversight

Our approach ensures your AI investments remain secure, scalable, and sustainable.

If your enterprise is ready to move from AI experimentation to reliable AI execution, we are ready to partner with you.

Contact NKKTech Global today to implement structured AI Testing that protects and powers your enterprise systems.

Contact Information:

🌎Website: https://nkk.com.vn

📩Email: contact@nkk.com.vn

💼LinkedIn: https://www.linkedin.com/company/nkktech